The Entrenchment Move

Competence anxiety and the politics of staying central

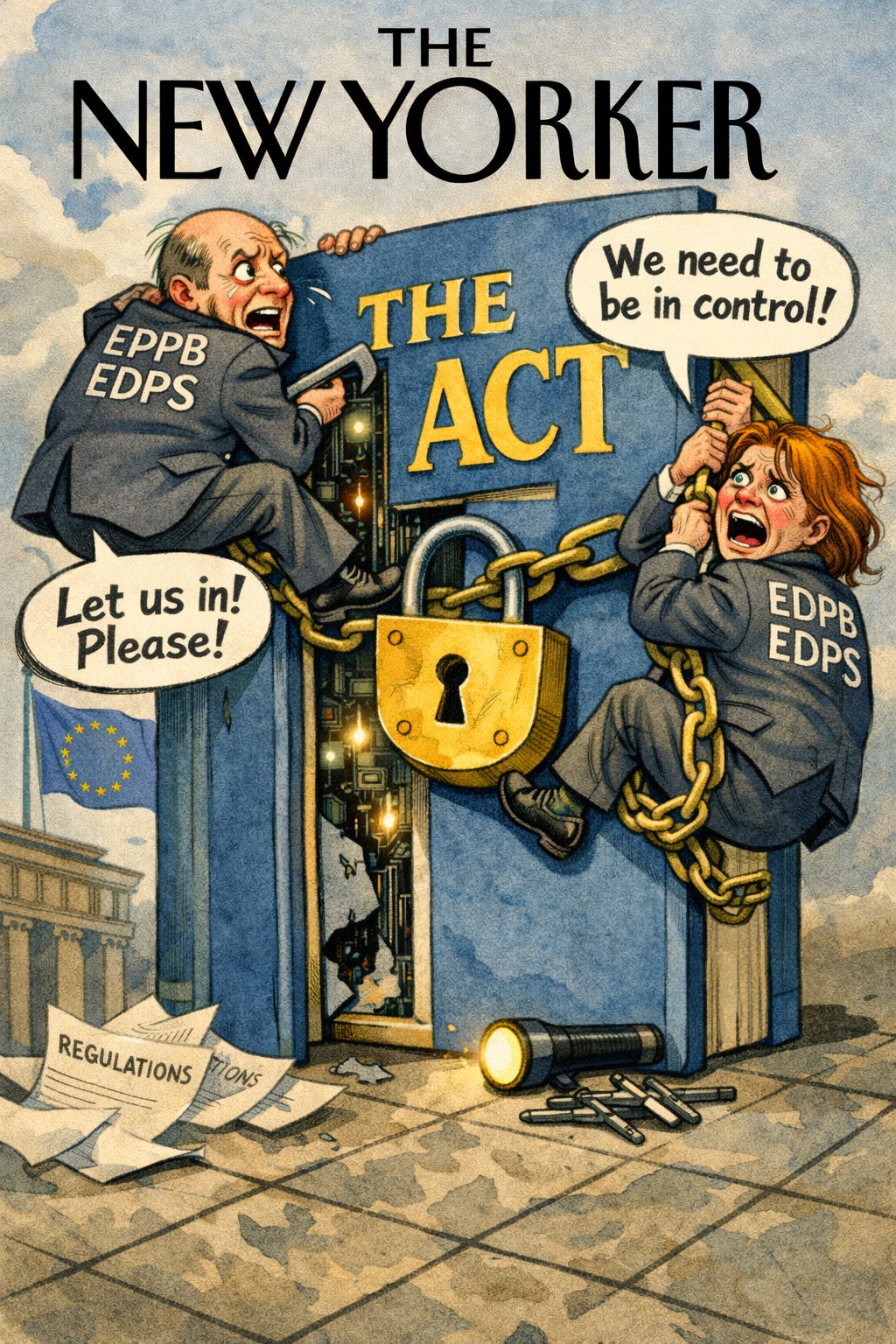

In my first post in this series, I argued that the Digital Omnibus is not a tidy exercise in legislative housekeeping, but a fault line that exposes a deeper struggle over what kind of regulatory project EU AI law is meant to be. If that diagnosis is right, then the response to the Omnibus matters more than the Omnibus itself. This second post starts from that premise and turns directly to the EDPB and EDPS Joint Opinion on the Digital Omnibus on AI. Read charitably, it presents itself as a sober warning against deregulation by stealth. Read closely and critically, it does something far more consequential. It reveals an institution under pressure, facing the slow redistribution of competence brought about by the AI Act, and responding by hardening its claims to indispensability. What the Joint Opinion offers is not a neutral technical critique, but a strategic effort to reassert gravitational pull. Across barely ten pages of substantive text, the Opinion invokes variations of the word “competent” an astounding thirty-three times, a density that speaks for itself. The effect is to ensure that, even as AI governance is formally redirected toward product safety, centralised supervision, and market logic, nothing of consequence can occur without data protection authorities being designated as the relevant competent actors: present in every process, embedded in every mechanism, and ultimately positioned to retain control.

“If you have to keep telling people you’re king, you’re not really king.” (Tywin Lannister, Game of Thrones)

Therefore, the EDPB and EDPS Joint Opinion 1/2026 should be read not as a defence of data protection as such, but as a regulatory counteroffensive. Rather than responding to the Commission’s proposals on their own terms, it recasts targeted simplifications as existential threats to fundamental rights, thereby shifting the terrain of debate from implementation to constitutional principle. In doing so, the Opinion deploys the language of rights not primarily to protect individuals, but to reassert institutional centrality, supervisory reach, and interpretive authority within the emerging AI governance framework. It is a carefully constructed exercise in reconstitutionalisation, one that treats any recalibration of competence or enforcement logic as a rights regression, and uses that framing to reclaim administrative territory that the Omnibus implicitly places elsewhere.

The “Gravitational Field” of Data Protection

The core claim running through this post’s analysis is that the EDPB seeks to keep the AI Act within the gravitational pull of data protection constitutionalism. That position is reflected throughout the Joint Opinion. Simplification is repeatedly treated as acceptable only insofar as it does not alter the level of protection afforded to fundamental rights. The practical consequence is that GDPR concepts, thresholds, and supervisory priorities are treated as the default reference point whenever the AI Act introduces a different regulatory calibration.

On this reading, the AI Act is not approached as an autonomous regulatory regime with its own logic of risk management and enforcement, but as a subsidiary framework whose operation remains contingent on continued alignment with GDPR doctrine. This can be seen in the insistence that data protection authorities (hereafter DPAs) be formally involved in EU-level sandboxes, that standards of strict necessity be preserved even where the legislature has opted for a broader formulation, and that transparency obligations retain priority irrespective of technical readiness. Across these positions, the GDPR serves as the controlling framework, with the AI Act operating in a subordinate role whenever personal data processing is implicated. In the context of AI systems, that is very often the case, though less often than one might think.

The Strategy of “Normative Overhang”

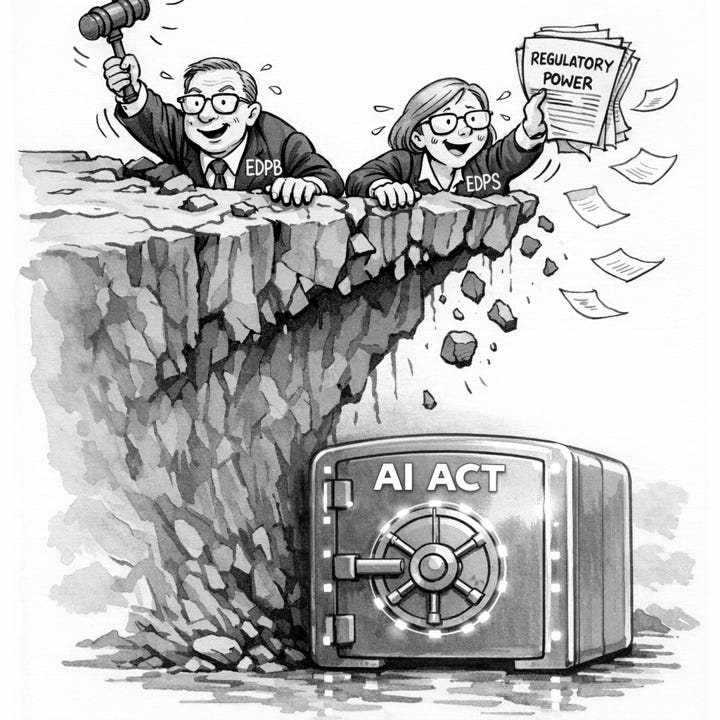

By insisting that the AI Act reproduce the GDPR’s level of doctrinal density and rights granularity, the EDPB engineers a condition of normative overhang, in which the demands placed on the AI Act exceed what its instruments were built to carry. The AI Act’s institutional design is anchored in product safety logic: ex ante risk classification, conformity assessment, technical documentation, and post-market surveillance carried out by specialised authorities. The Joint Opinion nonetheless reads these instruments through a fundamentally different lens, treating them as vehicles for the continuous vindication of individual fundamental rights.

In doing so, it assigns to the AI Office and market surveillance authorities tasks they are neither conceptually nor procedurally equipped to perform, such as assessing proportionality, necessity, or abstract rights impacts at the level of individual data subjects. That mismatch is not incidental. Once product safety tools are declared insufficient to discharge these demands, the conclusion follows that only data protection authorities possess the requisite competence.

The Joint Opinion invokes “competent” and “competence” thirty-three times. This does not resolve uncertainty about enforcement. It reveals it. The lady doth protest too much, because what is really being defended is institutional power.

The effect is to justify an expanded supervisory remit across the AI lifecycle, not because the legislature has conferred it, but because no other authority can meet standards imported from an adjacent constitutional framework. The result is a systematic recharacterisation of the AI Act as an extension of data protection law rather than a parallel regime, transforming it, in functional terms, into “GDPR Plus”.

Recasting Rights as Jurisdiction: How the Joint Opinion Converts Protection into Power

The EDPB’s rhetoric relies on a specific trick: it treats “fundamental rights” and “data protection compliance” as synonyms. By equating the broad protection of rights (which the AI Act aims to achieve through safety) with the specific procedural rituals of the GDPR (DPIAs, strict necessity), it delegitimises the AI Act’s own mechanisms. What is striking, when the Joint Opinion is read on its own terms, is how openly it converts the language of safeguards into a doctrine of institutional indispensability. Again and again, the EDPB and EDPS insist that any adjustment that reduces their direct visibility, anticipatory reach, or procedural leverage must be resisted, because it would significantly decrease accountability or undermine the protection of fundamental rights.

But their own text shows that what is really at stake is not the level of protection, but who gets to operationalise it. They argue, for example, that providers who lawfully rely on Article 6(3) AI Act must still publicly register their systems because this allows the public, DPAs, and fundamental rights bodies to intervene before market placement, explicitly invoking reputational pressure and early enforcement as virtues of the regime. That is not a safety argument. It is a claim for permanent upstream surveillance that would clearly chill innovation. Likewise, in relation to bias mitigation, they stress that DPAs would first and foremost be competent to supervise any processing of special category data, even where the AI Act creates a specific, self-contained derogation designed to facilitate compliance. On sandboxes, they accept the innovation rationale in principle, only to insist that competent DPAs must be “associated” with EU-level sandboxes, that their GDPR cooperation mechanisms must remain fully intact, and that the EDPB itself should acquire an advisory role and observer status on the AI Board.

This is competence expansion by accumulation, not coordination.

Even where the Omnibus grants the AI Office exclusive competence over general-purpose AI, the Joint Opinion immediately conditions this exclusivity on constant coordination with DPAs whenever privacy or data protection risks are present, a category so broad that it collapses exclusivity in practice. The most revealing passage comes where they warn that market surveillance authorities (MSAs) must not be allowed to assess the necessity or proportionality of requests by fundamental rights bodies, lest this “affect the independence and powers of DPAs”.

The concern is not duplication, delay, or over-enforcement, but dilution of authority. Taken together, the Joint Opinion reads less like a proportionality check and more like a reassertion of primacy. Fundamental rights are treated as synonymous with GDPR supervision, and GDPR supervision is treated as something that must remain omnipresent, even within a regulation deliberately designed to shift enforcement logic toward product safety, ex post surveillance, and centralised competence. This is not about preventing a race to the bottom; rather, it is about preventing a redistribution of power.

The EDPB’s Defence: Registration as Rights Infrastructure

The EDPB and EDPS reject outright the Commission’s proposal to remove the obligation to register AI systems that are formally listed in Annex III but, under Article 6(3) of the AI Act, assessed by the provider as not high-risk. In their view, allowing such systems to remain unregistered would “significantly decrease accountability” and create an “undesirable incentive” for providers to rely on the exemption. What matters here is not the exemption itself, which the Joint Opinion accepts in principle, but the refusal to treat it as a genuine carve-out. Registration is defended not as a technical necessity for market surveillance, but as a mechanism to preserve public visibility and early intervention. The Opinion is explicit that registration enables DPAs and fundamental rights bodies to identify systems before market placement, request documentation, and initiate scrutiny at an anticipatory stage, with reputational exposure framed as a legitimate regulatory tool rather than a collateral effect.

Once framed this way, the disagreement is no longer about administrative burden. It is about whether a lawful exemption can operate without continuous upstream disclosure. The Commission’s proposal aligns the AI Act with standard product safety practice, where compliance is presumed, documentation is held on file, and enforcement is triggered by evidence of risk. The EDPB’s response rejects that logic. By insisting that exempt systems must still be publicly registered, the Joint Opinion transforms Article 6(3) from an exemption into a provisional status, one that remains subject to permanent visibility and contestation. This is not a dispute about safety thresholds. It is a refusal to relinquish a surveillance infrastructure that keeps data protection authorities positioned upstream of innovation decisions.

The Registry of Innocence

Taken seriously, this logic produces a perverse outcome. Providers who rely on a lawful derogation must publicly announce that reliance, justify it in advance, and accept reputational risk as the price of using an exemption expressly provided by the legislature. The effect is a registry not of danger, but of asserted safety. A provider must effectively declare “this system is not high-risk” in order to prove that it deserves not to be treated as such. That is not how product safety law normally operates. In the New Legislative Framework, compliance is presumed unless evidence suggests otherwise. Enforcement is triggered by risk, not by the absence of risk.

The Joint Opinion dismisses the Commission’s own impact assessment on this point, noting that the administrative savings are modest. But that misses the structural issue. The question is not whether registration saves a few hundred euros per firm. The question is whether the regulatory architecture should push providers to over-classify systems as high-risk simply to avoid having their reliance on the exemption publicly scrutinised and contested. Faced with the choice between quiet internal documentation and public exposure to challenge, many providers will rationally opt into the high-risk category, flooding the system with compliance noise and undermining the very risk prioritisation the AI Act is meant to achieve.

Surveillance Logic Disguised as Accountability

What the registration debate ultimately exposes is a clash between two regulatory philosophies. The Commission’s approach treats Article 6(3) as a genuine exemption, policed through ex post market surveillance and sanctions for abuse. The EDPB’s approach treats the exemption as inherently suspect and insists on compensating for it through continuous visibility and early intervention. Accountability is equated with being seen, rather than with being demonstrably safe. In this sense, the defence of registration is not about aligning obligations with risk. It is about preserving a surveillance infrastructure that allows data protection authorities and allied bodies to remain upstream of innovation decisions.

Seen in that light, the resistance to deleting the registration obligation is entirely consistent with the broader pattern identified above. It is another instance in which product safety logic is rejected in favour of procedural exposure, not because the latter manages risk better, but because it sustains supervisory reach. The database becomes less a tool of market regulation and more a standing invitation to contestation. This is not transparency in the service of safety. It is transparency as a mode of control.

Ultimately, the EDPB is arguing that without registration, there is an “undesirable incentive” to cheat. But in product safety law, the deterrent against cheating is post-market surveillance (heavy fines, recalls), not pre-market confession. The EDPB’s distrust of the ex post enforcement model has led it to demand a surveillance infrastructure (the database) that covers even safe products. The Commission’s removal of this requirement is a correct alignment with the logic of Article 114 TFEU: we regulate risks, not the absence of risks.

In the next post, I turn to the point where this logic does its most tangible damage: regulatory sandboxes. What the AI Act presents as spaces for supervised experimentation and learning are reimagined in the Joint Opinion as controlled derogations from rights protection, requiring constant oversight, preserved enforcement powers, and institutional co-governance. The result is a sandbox in name only, one that functions as a pre-compliance audit with the threat of sanctions never far away. That shift matters because it exposes the deeper incompatibility between an innovation regime built on iteration and failure and a supervisory mindset that treats any relaxation of control as a constitutional risk.
